About This Article

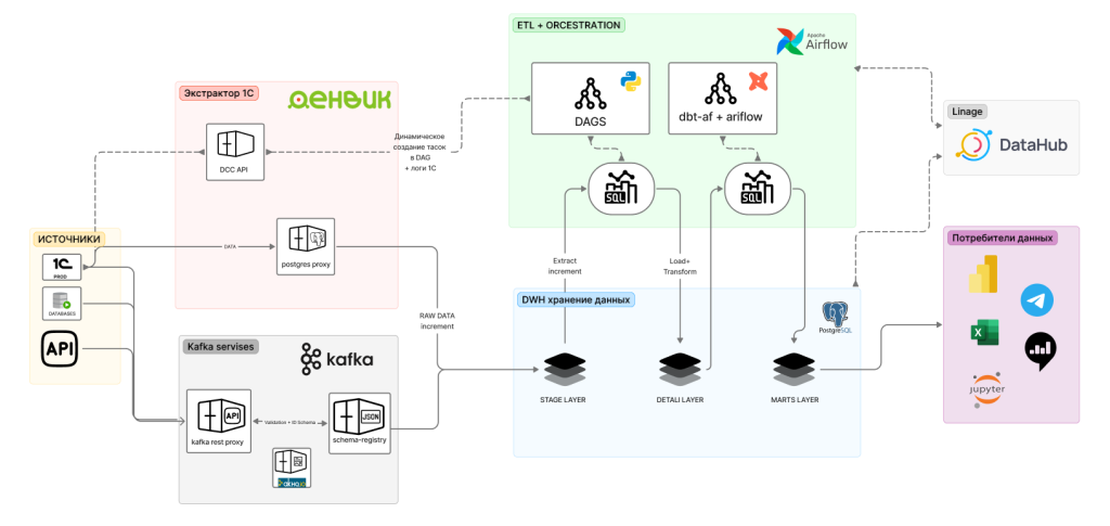

Aleksandr Mazalov (Senior Data Engineer & Data Architect) shares a real-world case of migrating from a chaotic architecture built on 1C and KNIME to a modern incremental DWH on Postgres, Airflow, and dbt.

The Problem

The legacy architecture copied the entire 1C database to MS SQL Server daily — a 3-4 hour process that frequently crashed. KNIME required 40 GB of RAM, SQL scripts were overloaded with JOINs, and data lagged two days behind reality. The result: distrust in numbers, manual verification, and inconsistent metrics across teams.

Key Ideas

Stage Layer — The “1C to BI Extractor” tool exports only deltas (new and changed records), tracking even retroactive corrections in 1C. Minutes instead of hours.

DDS Layer (Airflow + PostgreSQL) — A single DAG manages updates to ~50 tables: unique IDs, data cleansing, and SCD2 for history tracking. CI/CD via GitLab with a two-tier environment (TEST in Docker, PROD).

Stock Processing (Kafka + Partitions) — 300 million rows with retroactive changes and hourly update requirements. Kafka via Confluent buffers peak loads, while two Airflow DAGs process data in batches with dynamic date-based partitioning.

Data Marts (dbt-core + dbt-af) — Domain-structured models, DRY principle via macros, automatic DAG generation through dbt-af. Analysts independently develop data marts with data engineer review.

Results

Before: 2-day-old data, 40 GB RAM for full recalculation, days to build a new mart, manual verification, heavy load on 1C. After: hourly updates, efficient resource usage, 24 hours for a new mart, automated dbt tests, minimal load on 1C.

Why It Matters

This case study demonstrates how Airflow + dbt with proper engineering discipline transforms chaos into a manageable data platform. The tech stack — PostgreSQL, Apache Airflow, dbt-core, dbt-af, Kafka, GitLab CI/CD, Docker — is entirely open-source and reproducible.