About This Talk

At DoubleCloud 2022, I shared the experience of building a modern analytical infrastructure at Toloka AI using entirely open-source components. The talk covered the architecture decisions, operational lessons, and practical trade-offs of choosing open-source over managed services.

Key Ideas

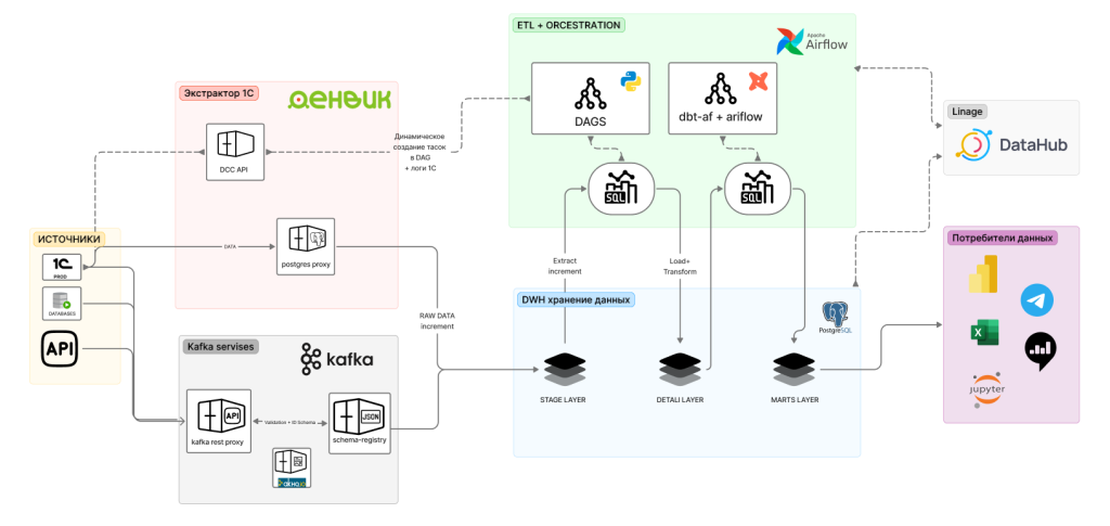

The Open-Source Data Stack: The architecture centered on Kafka for data ingestion and event streaming, ClickHouse for analytical storage and fast queries, and custom orchestration connecting the components. We chose each tool for its specific strengths rather than adopting a single vendor’s ecosystem.

ClickHouse in Production — Running ClickHouse at scale requires attention to cluster topology, replication strategy, sharding scheme, and materialized view design. The talk covered practical lessons: how we designed our table schemas, managed data lifecycle, and used materialized views for real-time aggregation without impacting query performance.
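To make the materialized-view idea concrete, here is a minimal conceptual sketch in Python. It is not ClickHouse code and not the schema from the talk; it only models the general principle that an insert-triggered view folds each incoming batch into pre-aggregated state, so that read queries touch the small aggregate rather than the raw events. The class name `AggregatingView` and the row fields `project`, `ts`, and `duration_ms` are hypothetical, chosen for illustration.

```python
from collections import defaultdict


class AggregatingView:
    """Toy model of an insert-triggered materialized view (illustrative only)."""

    def __init__(self):
        # (project, minute) -> [event_count, total_duration_ms]
        self.state = defaultdict(lambda: [0, 0])

    def on_insert(self, rows):
        """Fold a freshly inserted batch into the aggregate state."""
        for row in rows:
            key = (row["project"], row["ts"] // 60)  # bucket by minute
            cell = self.state[key]
            cell[0] += 1
            cell[1] += row["duration_ms"]

    def query(self, project):
        """Read per-minute aggregates without scanning raw events."""
        return {
            minute: tuple(cell)
            for (proj, minute), cell in sorted(self.state.items())
            if proj == project
        }


view = AggregatingView()
view.on_insert([
    {"project": "a", "ts": 0, "duration_ms": 100},
    {"project": "a", "ts": 59, "duration_ms": 50},
])
view.on_insert([
    {"project": "a", "ts": 60, "duration_ms": 10},
    {"project": "b", "ts": 5, "duration_ms": 7},
])
result_a = view.query("a")
```

The aggregation cost is paid once per inserted batch, which is why reads against the aggregate stay cheap as the raw data grows.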

Kafka as the Data Backbone — Kafka served as the central nervous system of the data platform. We covered topic design principles, partitioning strategies for parallelism, consumer group management, and the Kafka-ClickHouse integration via the Kafka table engine — enabling near-real-time data availability.
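The link between partitioning and parallelism can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the configuration used at Toloka: `partition_for` uses CRC32 as a stand-in for a stable key hash (Kafka's own default partitioner uses a different hash function), and `assign_round_robin` is a simplified model of how partitions are spread across the members of one consumer group. Both function names are hypothetical.

```python
import zlib


def partition_for(key: str, num_partitions: int) -> int:
    """Map a message key to a partition using a stable hash.

    Messages with the same key always land in the same partition,
    which preserves per-key ordering.
    """
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def assign_round_robin(num_partitions: int, consumers: list) -> dict:
    """Spread partitions across the consumers of one group.

    Each partition is read by exactly one consumer in the group, so
    the partition count caps the useful number of consumers.
    """
    assignment = {consumer: [] for consumer in consumers}
    for partition in range(num_partitions):
        owner = consumers[partition % len(consumers)]
        assignment[owner].append(partition)
    return assignment


assignment = assign_round_robin(6, ["c1", "c2", "c3"])
same_partition = partition_for("user-42", 6) == partition_for("user-42", 6)
```

With six partitions and three consumers, each consumer owns two partitions; adding a seventh consumer would leave it idle, which is one reason partition counts are usually chosen with future parallelism in mind.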

Operational Maturity — Building the stack was the easy part. The talk dedicated significant time to operational concerns: monitoring strategies, alerting configurations, capacity planning, schema evolution processes, and the organizational practices that keep an open-source stack running reliably.

Why It Matters

The modern data stack doesn’t have to mean expensive SaaS tools. With the right engineering investment, open-source technologies like ClickHouse and Kafka can deliver enterprise-grade analytics at a fraction of the cost while giving you full control over your data infrastructure.